Databricks SQL Encryption

The following steps enable use of Privacera encryption services in a Databricks SQL notebook:

Create a secret shared by Privacera Encryption Gateway (PEG) and Databricks.

Create Resource Policies in Privacera for data access to Databricks SQL resources.

Create Privacera encryption and decryption User-Defined Functions (UDFs) in Databricks.

For more information about Privacera encryption schemes, see the Privacera Encryption Guide.

Prerequisites

A working Databricks SQL installation connected to PrivaceraCloud. See Databricks to learn more.

Databricks CLI installed on your client system and configured to connect to your Databricks host. See Databricks Documentation: Databricks CLI and Databricks Documentation: Authenticating using Databricks personal access tokens.

Privacera Encryption Gateway (PEG) enabled and configured in your account settings. See About Account.

Grant permission in encryption scheme policy

To use Databricks SQL encryption, you must create a scheme policy for a user that will use the Databricks UDF. This scheme policy must grant the getSchemes permission. See Create Scheme Policies on PrivaceraCloud to learn more.

Configure Databricks

With the Databricks CLI:

Create a secret scope called privaceracloud:

databricks secrets create-scope --scope privaceracloud

Add secrets to this scope. The keys peg_username, peg_password, and peg_secret are literals and should be entered exactly as shown. The <username>, <password>, and <sharedsecret> values below are the same as what you entered in PrivaceraCloud when adding the PEG service. See API Key to learn more.

databricks secrets put --scope privaceracloud --key peg_username --string-value <username>

databricks secrets put --scope privaceracloud --key peg_password --string-value <password>

databricks secrets put --scope privaceracloud --key peg_secret --string-value <sharedsecret>
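The three put commands differ only in the key and the value, so they can be scripted. The following is a minimal sketch, not an official Privacera script. It assumes the values are supplied through environment variables named PEG_USER_VALUE, PEG_PASS_VALUE, and PEG_SHARED_VALUE, and it uses a DRY_RUN switch; these names are hypothetical and exist only in this example. By default the script prints the commands it would run, with the values masked.

```shell
#!/bin/sh
# Sketch: store the three PEG secrets in the privaceracloud scope.
# PEG_USER_VALUE, PEG_PASS_VALUE, PEG_SHARED_VALUE and DRY_RUN are
# hypothetical names used only in this example.
SCOPE="privaceracloud"

put_peg_secret() {
    # $1 = secret key (literal), $2 = secret value
    if [ "${DRY_RUN:-1}" = "1" ]; then
        # Dry run: show the command without revealing the value.
        echo "databricks secrets put --scope $SCOPE --key $1 --string-value ***"
    else
        databricks secrets put --scope "$SCOPE" --key "$1" --string-value "$2"
    fi
}

put_peg_secret peg_username "${PEG_USER_VALUE:-}"
put_peg_secret peg_password "${PEG_PASS_VALUE:-}"
put_peg_secret peg_secret   "${PEG_SHARED_VALUE:-}"
```

Run it once as shown to review the commands, then run it again with DRY_RUN=0 to execute them with the Databricks CLI.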

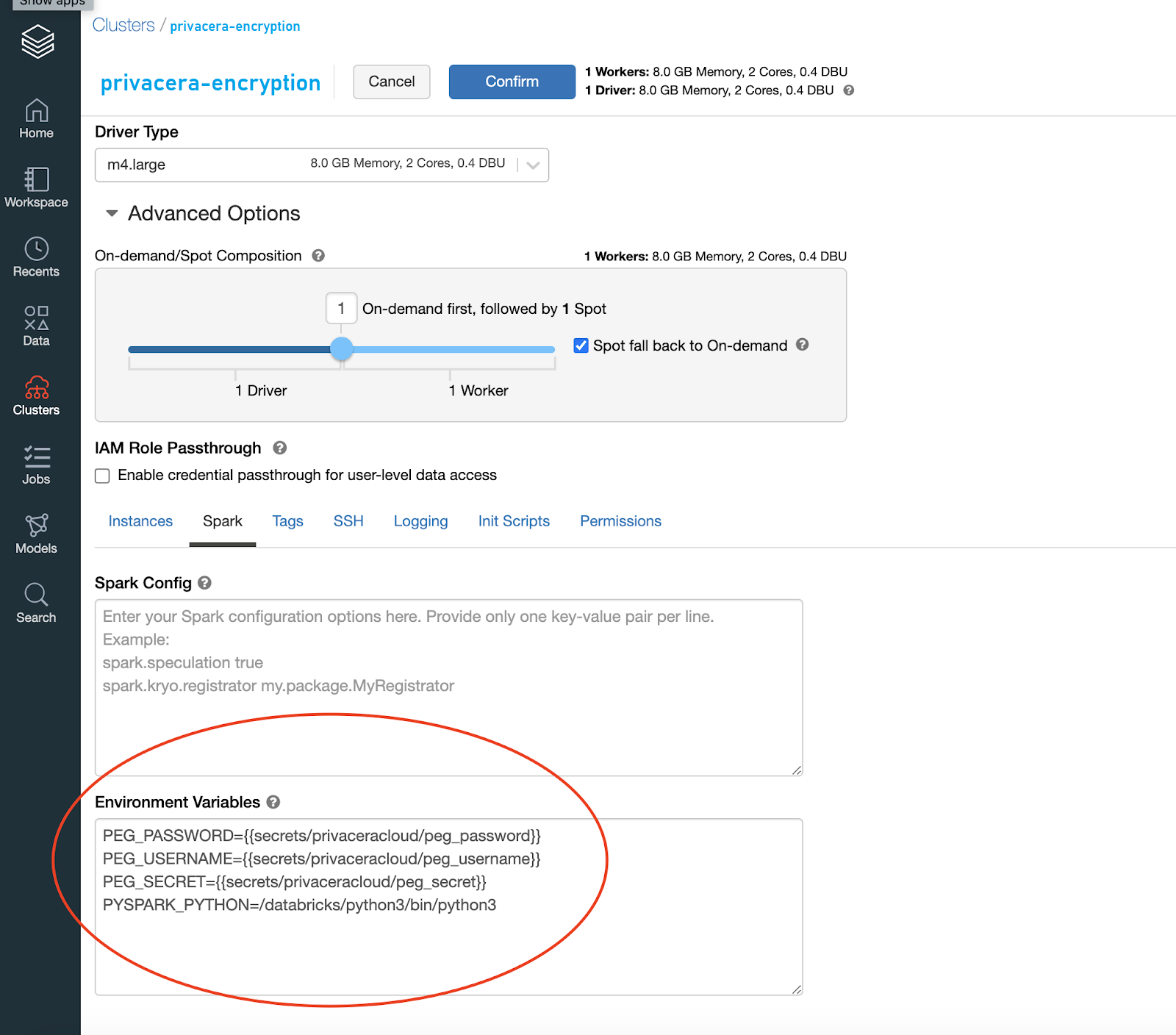

Add the following environment variables in your Databricks cluster:

PEG_SECRET={{secrets/privaceracloud/peg_secret}}

PEG_PASSWORD={{secrets/privaceracloud/peg_password}}

PEG_USERNAME={{secrets/privaceracloud/peg_username}}

Caution

The cluster might already have other environment variables defined. Do not remove them.
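After the cluster restarts, it can be useful to confirm that the three variables are visible in the cluster environment. The following is a minimal sketch, assuming it is run in a shell on the cluster (for example from a %sh notebook cell). The check_peg_env function is a hypothetical helper written for this example. It reports only whether each variable is set and never prints a value.

```shell
#!/bin/sh
# Sketch: confirm the PEG variables reached the cluster environment.
# check_peg_env is a hypothetical helper, not part of any Privacera tool.
check_peg_env() {
    missing=0
    for name in PEG_USERNAME PEG_PASSWORD PEG_SECRET; do
        # Indirect lookup of the variable called $name (POSIX sh has no ${!name}).
        eval "value=\${$name:-}"
        if [ -z "$value" ]; then
            echo "$name is NOT set"
            missing=1
        else
            echo "$name is set"
        fi
    done
    # Return 0 only when all three variables are present.
    return "$missing"
}

check_peg_env || echo "One or more PEG variables are missing"
```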

Log in to Databricks, create a notebook, and set the language to SQL.

Run the following SQL commands in Databricks to create UDFs for Privacera encryption services, named protect and unprotect.

Note

com.privacera.crypto functions enable use of encryption schemes, but do not accept presentation schemes.

Create the Privacera protect UDF:

create database if not exists privacera;

use privacera;

drop function if exists privacera.protect;

CREATE FUNCTION privacera.protect AS 'com.privacera.crypto.PrivaceraEncryptUDF';

Create the Privacera unprotect UDF:

use privacera;

drop function if exists privacera.unprotect;

CREATE FUNCTION privacera.unprotect AS 'com.privacera.crypto.PrivaceraDecryptUDF';

Configure Privacera resource policies

Databricks SQL resources are managed under Access Manager > Resource Policies > privacera_hive.

To add resource policies to allow access to selected resources:

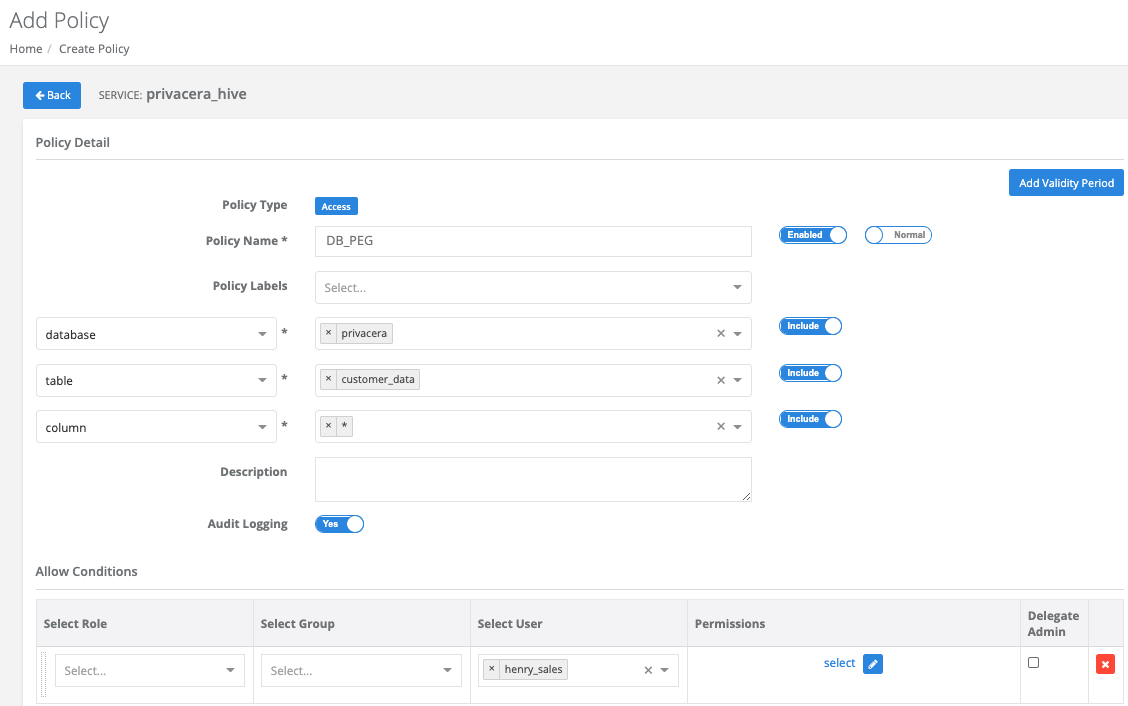

Create a policy to give data access users, groups, or roles the select privilege to target database resources. On the Add Policy page, under Allow Conditions, use Select Role, Select Group, and/or Select User, then under Permissions choose select. For example:

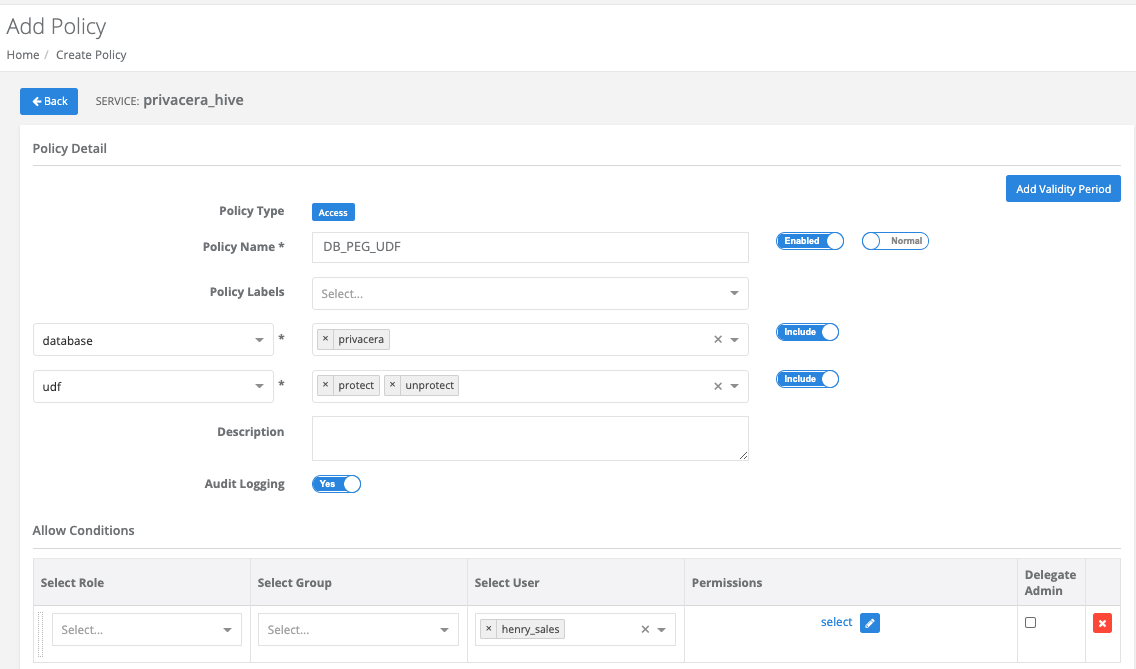

Create a policy to grant data access users, groups, or roles the select privilege to the protect and unprotect UDFs. On the Add Policy page, under Allow Conditions, use Select Role, Select Group, and/or Select User, then under Permissions choose select.

How to use UDFs in SQL to encrypt and decrypt

The following are SQL command examples for privacera.protect (encrypt) and privacera.unprotect (decrypt) UDFs:

select privacera.protect(<COLNAME>,'<ENCRYPTION_SCHEME_NAME>') from <DB_NAME>.<TABLE_NAME>;

<COLNAME> is the identifier of the column to encrypt.

<ENCRYPTION_SCHEME_NAME> is the name of the chosen Privacera encryption scheme.

<DB_NAME>.<TABLE_NAME> are the names of the database and the table in that database.

Example

In this example, the email column of the bigdatabase.customer_data table is encrypted with the SYSTEM_EMAIL encryption scheme.

select privacera.protect(email, 'SYSTEM_EMAIL') from bigdatabase.customer_data;

select privacera.unprotect(<COLNAME>,'<ENCRYPTION_SCHEME_NAME>') from <DB_NAME>.<TABLE_NAME>;

<COLNAME> is the identifier of the column to decrypt.

<ENCRYPTION_SCHEME_NAME> is the name of the chosen Privacera encryption scheme, which must be the same encryption scheme that was originally used to encrypt the data.

<DB_NAME>.<TABLE_NAME> are the names of the database and the table in that database.

Example

In this example, the email column of the bigdatabase.customer_data table is decrypted with the SYSTEM_EMAIL encryption scheme.

select privacera.unprotect(email, 'SYSTEM_EMAIL') from bigdatabase.customer_data;

The unprotect UDF supports an optional specification of a presentation scheme that further obfuscates the decrypted data.

For an example of data transformation with the optional presentation scheme, see Example of Data Transformation with /unprotect and Presentation Scheme.

Example query:

select id, privacera.unprotect(<COLUMN_NAME>, '<ENCRYPTION_SCHEME_NAME>', '<PRESENTATION_SCHEME_NAME>') <OPTIONAL_NAME_FOR_COLUMN_TO_WRITE_OBFUSCATED_OUTPUT> from <DB_NAME>.<TABLE_NAME>;

<PRESENTATION_SCHEME_NAME> is the name of the chosen Privacera presentation scheme with which to further obfuscate the decrypted data.

<OPTIONAL_NAME_FOR_COLUMN_TO_WRITE_OBFUSCATED_OUTPUT> is a "pretty" name for the column that the obfuscated data is written to.

Other arguments are the same as in the preceding unprotect example.
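To see how the placeholders in the templates map to a finished statement, the queries can be generated from their parts. The following is a minimal sketch. The render_query function is a hypothetical helper written for this example; it only prints SQL text to paste into the notebook and does not contact Databricks. Passing the presentation scheme as a quoted string literal, like the encryption scheme, is an assumption of this sketch.

```shell
#!/bin/sh
# Sketch: build protect/unprotect queries from the templates above.
# render_query is a hypothetical helper; it only prints SQL text.
render_query() {
    # $1 = UDF name (protect or unprotect), $2 = column,
    # $3 = encryption scheme, $4 = database.table,
    # $5 = optional presentation scheme (unprotect only)
    if [ -n "${5:-}" ]; then
        printf "select privacera.%s(%s, '%s', '%s') from %s;\n" "$1" "$2" "$3" "$5" "$4"
    else
        printf "select privacera.%s(%s, '%s') from %s;\n" "$1" "$2" "$3" "$4"
    fi
}

# Reproduces the two worked examples from this section.
render_query protect   email SYSTEM_EMAIL bigdatabase.customer_data
render_query unprotect email SYSTEM_EMAIL bigdatabase.customer_data
```

The encryption scheme passed to unprotect must be the one that was used with protect, so generating both statements from the same arguments helps keep them in step.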